Forevery - An iOS App For Searching Your Photo Library

Our portfolio company Clarifai, which offers a visual recognition API to developers so they can understand the images and videos on their service, has released an iOS app called Forevery which allows you to search your iPhone photo library.

If you’ve ever found yourself swiping down and down and down on your iPhone trying to find a photo to show to your friend, then Forevery is for you. It is one of those things that, when you first see it in action, you think is magic.

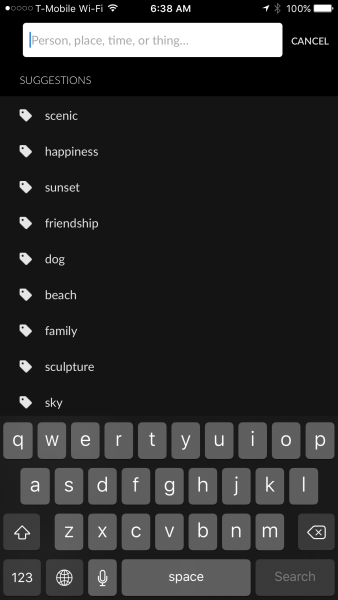

Here’s the screen you get when you open the app:

When you type into the search field, you get this:

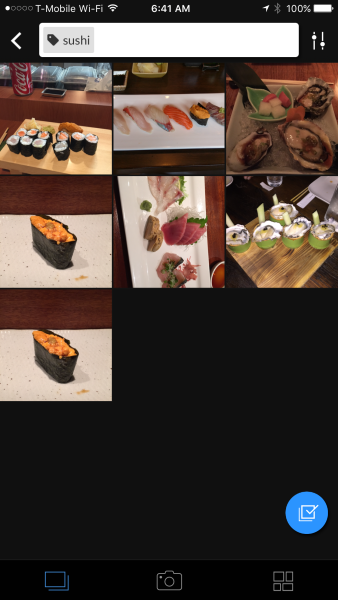

I typed sushi and got these results:

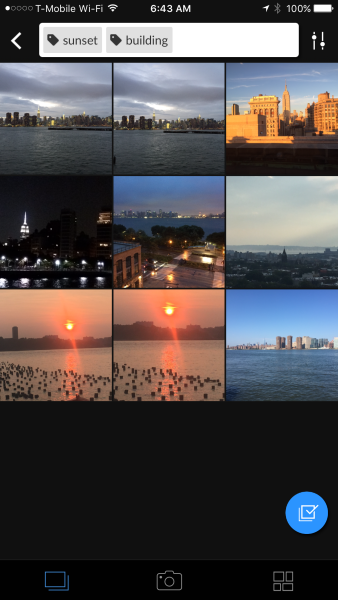

I was looking for a sunset photo of the Flatiron building from my office so I typed “sunset building” and got these results. The photo I was searching for is the third one.

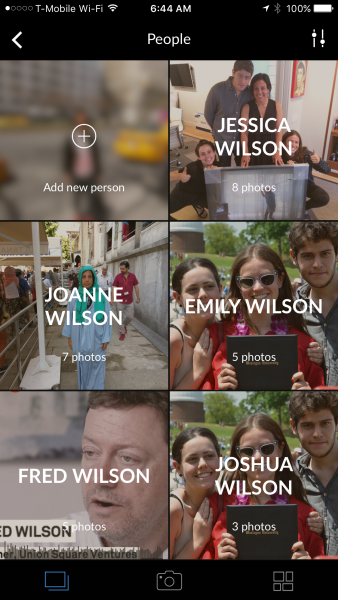

But maybe the most amazing thing about Forevery is you can train it to recognize people and things. I’ve trained it to recognize these people in my photos:

So that’s a quick run through of Forevery. If you want to get it on your iPhone, you can download it here.

Comments (Archived):

Google also recently released an API for helping to understand images -> http://googlecloudplatform…. As this technology becomes easier and more mainstream, I think we are going to see a large increase in 1st-gen A.I. (or at least what appears to be A.I., or at the very least magical, to the average person). Exciting times continue to be just around the corner!

Class Assignment. Compare and contrast 2 USV partners’ posts on the same product launch. http://continuations.com/po… For starters: Fred takes a UX perspective – search ‘sunset’. Albert more a machine learning one – search ‘love’.

Maybe the thing I like most about the USV team is the way we complement each other

Really slick. Kind of a magical experience in a way. Like “Wow, how’d they do that” which is a great feeling to have with an app. The onboarding was nicely done, too. Personal and warm and pretty accurate right out of the gate. Kudos to the team.

Love that building

i wonder if there was ever a list of provisional names? “The Wedge” “The Toblerone”

were the photos tagged (description, location, etc.) prior to using Forevery to find them?

No

then it’s good magic.

The tagging happens like so… The location is straightforward enough if the person taking the photo has enabled “Location services” on their phone. The photo can get auto geo-tagged in that way.

a smart device could be geo tagged with the angle of the photo and the time of the day. add the Sun’s known path across the sky at that location and “sunrise” and “sunset” photos may be identified with some accuracy. add weather forecast and tall buildings mapping to predict brilliance.
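The idea in the comment above can be roughed out in a few lines. This is only a minimal sketch, not how Forevery or any other app actually works: it assumes a photo carries a UTC timestamp plus latitude and longitude, uses a standard low-precision sunset approximation (solar declination, hour angle, equation of time), and ignores the camera angle, weather and nearby buildings the commenter mentions. The function names `sunset_utc` and `is_near_sunset` and the 45-minute window are hypothetical choices made for illustration.

```python
import math
from datetime import date, datetime, timedelta, timezone


def sunset_utc(day, lat_deg, lon_deg):
    """Approximate sunset time (UTC) for a calendar date and location.

    Returns None when the sun does not set or rise that day (polar regions).
    Accuracy is on the order of a few minutes, enough for rough tagging.
    """
    n = day.timetuple().tm_yday  # day of year, 1..366
    # Approximate solar declination in radians.
    decl = math.radians(-23.44) * math.cos(2 * math.pi * (n + 10) / 365)
    lat = math.radians(lat_deg)
    # Hour angle at sunset; -0.833 degrees accounts for refraction and disc size.
    cos_h = (math.sin(math.radians(-0.833)) - math.sin(lat) * math.sin(decl)) / (
        math.cos(lat) * math.cos(decl)
    )
    if not -1.0 <= cos_h <= 1.0:
        return None
    # Equation of time in minutes (common curve-fit approximation).
    b = 2 * math.pi * (n - 81) / 364
    eot_min = 9.87 * math.sin(2 * b) - 7.53 * math.cos(b) - 1.5 * math.sin(b)
    solar_noon_h = 12 - lon_deg / 15 - eot_min / 60  # hours after 00:00 UTC
    sunset_h = solar_noon_h + math.degrees(math.acos(cos_h)) / 15
    midnight = datetime(day.year, day.month, day.day, tzinfo=timezone.utc)
    return midnight + timedelta(hours=sunset_h)


def is_near_sunset(taken_utc, lat_deg, lon_deg, window_minutes=45):
    """True if a timezone-aware UTC timestamp falls within the window around sunset."""
    window = timedelta(minutes=window_minutes)
    # Check neighbouring dates too: local sunset can land on a different UTC date.
    for offset in (-1, 0, 1):
        s = sunset_utc(taken_utc.date() + timedelta(days=offset), lat_deg, lon_deg)
        if s is not None and abs(taken_utc - s) <= window:
            return True
    return False
```

For example, calling `is_near_sunset` with a timestamp of 21:15 UTC on 15 December 2015 and coordinates near the Flatiron Building (roughly 40.74, -73.99) would flag the photo as a sunset candidate; a real system would presumably combine a signal like this with what the image itself shows.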

Was thinking the same thing. Suggest also knowledge related to beard stubble on face.

powerful tech indeed. could be used for good or evil, depending on who controls it. keep it away from people like Donald.

Donald is fighting a noble fight at great personal risk to himself actually. Thanks for the mention that allows me to offer a political message. Here is how absurd this PC stuff has become. We can’t use the word “master” anymore. The tenured, full-time professors who live in student dorms will now be called faculty directors. They provide academic and personal support to residents. Over the last two weeks, Princeton and Harvard Universities also have agreed to change the titles; Yale University students and faculty are debating whether to follow suit. Supporters of the change argue that master connotes a legacy of slavery. http://www.philly.com/phill…

the tech is almost too powerful to be in the hands of any individual or group. put it on the blockchain with even more powerful encryption protection. his personal wealth gives Donald the freedom to express his opinions. other candidates need to check first with their paymasters before speaking. am i allowed to say ‘paymasters’? wealth = power/influence = responsibility?

Completely. There are specific feature layers for shadows, tones and angular shifts in light difference. @domainregistry:disqus – hair, stubble etc was/is challenging. The feature contrast between skin and synthetic material (clothing, metal of cars etc) is getting better in the algorithms.

Isn’t this something Google photos is already doing?

Yes, but not everyone is using Google Photos.

yes, via their new open source library

The market for this is as big as there are people with digital photos. Gonna have to work almost perfectly. Really cool.

Well.. the market is a bit smaller I think. I’d say the dominant paradigm is that people take a lot of photos on their phone, and upload the best ones to Instagram, Facebook, wherever…. so those platforms are the go-to for their top photos. The camera roll becomes that shoebox in the closet that has all those developed photos that didn’t make it into an album or frame. Wow, I feel old now.

Yes you are correct that today we curate and post. But I stand by my market slice cause the win here is not to sit on top of the selected posted ones but to sit on top of all of the ones we have. We only select what we can find. This wins by changing the paradigm is my sense.

This is a big problem for me. Excited to try.

Oh goody! Just yesterday I was reading through some presentations from the NIPS (Neural Information Processing Systems) conference. Visual recognition (via Deep Learning) has been researched by academics for about 40 years and it’s now productized.

By the way, Yann LeCun who did these slides is the NYU professor of AI who now heads up Facebook’s AI unit so the Clarifai team likely studied with him?

Yup. At least 3 of the major research engineers published with him. The CEO/main founder is definitely his student as well. One of the other research engineers also specializes in convolutional nets. It’s pretty clear they are using recursive nets. and… I need to yell at some people….

Thanks Fred! This app has been awesome to work on and even more awesome to use every day!

When Android?

This sounds great can’t wait to test it out.

There’s some magic behind the scenes that’s very satisfying to a user. When is the Android version coming out?

I’d be interested to know if the ‘processing’ happens on the device or in the cloud somewhere. My privacy alarm bells go off when I think that my personal and unshared photos are being sent somewhere for image recognition.

It seems that is exactly what it does, and the privacy statement available on the App Store says so, including the sharing of some personal information with ‘trusted partners’ related to analytics. I could not read or link to the privacy statement from the app itself (!). I have very sensitive privacy alarms too and really like to have control of what goes to the cloud. Are we being paranoid or just getting too old?

Good find. Well no thanks then. We aren’t paranoid, just niche privacy enthusiasts. As for being old… I dunno. My teenager makes a huge stink about privacy when I ask to see his phone. Maybe there is hope for his generation yet.

24yo here. I think this is a cool app. Not downloading it because of privacy concerns either. That said, I think it’s probably futile, as I share more information about myself than I’m aware of on a daily basis.

Cute pic of the fam.

In AI, 50% of Da Vinci’s genius “All knowledge has its origins in our perceptions” is close to being solved. The other 50%, though, requires tools invention. ‘Vitruvian Man’ has been my favorite work of art since childhood. Where did I go as soon as I was old enough to travel by myself? The Accademia in Venice with my sketchpad. Hey, and check out the shape of Venice (the Serene Republic). Yup, the coupling of 2 hands … like Yin+Yang. LOL.

Recent ITP theory (Interface Theory of Perception, University of California) is validating Leonardo’s statement. In our research we have seen a paradox as to why AI, Big Data and Natural Language (all linked) are failing at the final hurdle due to an incorrect foundation design! Our M3 CLIC (Cognitive Linguistic Intelligent Catalyst) joins the results of perception. @m3ip:disqus

So… I’d worked out why AI, Big Data and NLP are all failing back in 2009. Long before Amit Singhal of Google said, “Meaning is something that has eluded Computer Science” in Nov 2015. The problem with ALL of the foundation design by Google, Facebook, IBM Watson, Baidu et al (and this includes academic institutions) is the “Probability Paradox” which also exists in Quantum Theory. They’re all built from the “Knowledge is a cube (logic box)” view. Therein, Probability is expedient as a tool for measuring vector correlations. However, if the view is “Knowledge is a sphere”… different tools need to be invented.

There are two parts to what Da Vinci meant by “All our knowledge has its origins in our perceptions.” (1.) The objective and empirically measurable variables of optics => visual recognition by logic, mechanics and probability. (2.) The subjective and (as yet not coherently) measurable variables of opinions => language recognition. The “Holy Grail” algorithm everyone in AI is seeking. The incorrect foundational design is in Mathematics as a language itself. It’s fixable. I know this because … I FIXED IT by inventing a “twin tool to Probability” and kicking Descartes back to the Dark Ages where he belongs. Plus my system solves an area of Quantum Physics that Max Tegmark of MIT hasn’t been able to produce a suitable equation for: his proposal for the existence of “Perceptronium, the most general substance that can feel subjectively self-aware.” Clearly, that has implications for Quantum Computing since computing is always borrowing from its parent sciences (Maths, Physics, Biology, Chemistry). Well, I wrote my little Quantum equation in computer code form years before Max Tegmark published his theory in Jan 2014. So … I’ve known the foundation design for AI, Big Data and NLP is wrong for a really long time. And I decided to INVENT by standing on the shoulders of Da Vinci and others and integrating everything I’d ever learnt to do so. @wmoug:disqus

paper?

What’s the link to M3 CLIC research, thanks.

Incorrect foundation design.

By first principles, I pinpointed the incorrect foundation design down to Descartes. His philosophy is the source of the paradoxes and why we went down the paths whereby AI, Big Data and Natural Language are failing at the final hurdle and the hurdles before then. Is it possible to resolve the “Probability Paradox” that Einstein, Schrodinger, Von Neumann, Daniel Kahneman have all referenced in one way or another? Indeedy, it is.

I must confess ignorance as to which paradox in probability you’re referring to – though the most likely (pardon the pun) seems to me like it’d be the framing one, where “uniform” distributions become rather less so depending on which way you look at them. As for Descartes, “I think, therefore I am” is true so far as it goes, but its non-vacuous conclusions rely on a lot of supporting assumptions in order to avoid things like “I think, therefore I am a tape recorder on repeat saying ‘I think, therefore…’” But that’s just surface-level reactions; despite my background I haven’t actually explored Descartes’ positions in detail.

The timeline for Perception started with Primitive Man, when it was used for survival. This process in the reptilian brain has to be included in the current processes for AI, Big Data, image recognition, natural language and intelligence. This is the paradox that needs to be solved. Russell and Whitehead: a child process cannot solve a mother process (an error in logical typing). Tweet me for the research discussion.

Replace “primitive man” with “primitive bacteria” in your first sentence and I’d agree. (What are cilia if not a way of perceiving and interacting with one’s environment?) Perception of some sort is arguably linked to just about anything we recognize as life, however myopic it may be.

“THE PROCESS / THE PATTERN” connects all nature (the bacteria and primitive man are various stages in the timeline). The processes that modify living systems were subject to stochastic evolutionary processes – mutation of DNA.

Re Gregory Bateson (Mind & Nature), reptilian brains and “quant thinking cannot analyze complex non-linear processes found in nature”, here’s my mesh of John Von Neumann (a father of Modern Computing) with Simon Sinek. @wmoug:disqus – did we decide on “mesh” rather than braided?

I wonder which ring does WTF belong to. Maybe to all three: W..neocortex, T.. Limbic and F, definitely reptilian. A cascade chain reaction or quantum system failure. 🙂

Haha, brilliant comment. Here’s how Google applies their theory of how the cortex works to AI and natural language understanding. Cascade chain reaction from amiss assumptions and quantum system failure indeed.

That is precisely it! We have found a mathematical innovation / algorithmic / cybernetic process which makes the non-linear processes of nature into linear feedback in time, which led to human survival with quick feedback. We have taken Bateson’s mental characteristics and created a workable epistemological tool! Do tweet @m3ip so that we can discuss offline on Skype? I am having the same problems during fundraising / VCs in getting them to understand the discovery and maintain control over m3’s IP. Your photographs were an ideal example of the drawbacks of current image recognition. ITP has just been formulated – we have been using a similar basis for more than 10 years. Let’s talk offline.

You may be having problems fundraising but we’re not in the same situation at all. You’re trying to raise $40 million seed for a DOS-based MVP which you’ve been working on for 10+ years based on Bateson’s work. It sounds like an academic research project rather than a commercial solution. I built a commercial solution from the start.

The reason for using DOS was to demonstrate that the foundation design did not rely on memory or processing power. Hence, the core engine is not a trivial design. It reduces datacentre capex by 25% because of the efficiency of the design. I regret that we cannot continue this conversation unless I have a clue as to who I’m talking to. Best of luck with your commercial solution. Best, @m3ip

The language of the nervous system is not mathematical or in Von Neumann / Wiener’s format. The Cortex is non linear and we have to merge the reptilian part with the later parts of the brain which shows up in MRI scans… hence the failure in design. After all, in the design to FLY we invented / observed Lift and Thrust and created a tool to fly without mimicking the non linearity of the bird’s wing or muscles.

Re UC Irvine ITP, I’ve now read Hoffman’s paper and there are a number of flaws in his assumptions and his model. However, there is one paragraph which points to how Descartes contributed to the mechanistic logic of today’s Machine Intelligence. “Descartes similarly argued ‘it must certainly be concluded regarding those things which, in external objects, we call by the names of light, color, odor, taste, sound, heat, cold, and of other tactile qualities … ; that we are not aware of their being anything other than various arrangements of the size, figure, and motions of the parts of these objects which make it possible for our nerves to move in various ways, and to excite in our soul all the various feelings which they produce there’ (1644/1647/1984, p. 282).” Size, figure and motion are exactly how visual recognition works.

Here’s size, figure and motion in visual recognition.

I just had a professional security system installed which has, on the NVR, some minor abilities to do recognition but nothing that comes anywhere close to this. It would be great if software like this was built into the box (I think it almost certainly runs on Linux). Tremendous opportunity here, unfortunately not Clarifai’s market. You could train it to recognize when certain customers walked through the door or even when certain employees were in certain rooms and so on. I am guessing (w/o checking) that this already exists but probably is quite expensive. Could use it to track all sorts of activities. Right now using this app you could actually hack together something that I described above by simply getting the photos over to an iPhone from whatever capture device you were using (or simply setting up iPhones; I did call it a hack).

Invest in haberdasheries now!

Installing it now, it requires “your mobile number” to sign in. I am wondering why it needs that? [1] Reason appears to be so it can send you a verification code but still doesn’t explain why it even needs to do that. I’m still waiting for the sending of the verification code to complete. Update: It failed two times to accept the verification code that it sent me. The code I am inputting is correct, there appears to be a problem here. Two tries, no success. [1] There should be an explanation on the page that is asking for the phone number.

The apps in general are over-reaching. They pretty much ask for access to everything. I just had 39 updates and took screenshots of what they wanted. And we have no real choice or say in the matter. Not sustainable IMO.

We ask for your mobile number so you can share photos with other people. We’ve got some pretty sweet magic going on with how we recommend who to share photos with. Very sorry the code isn’t working for you. Can you kill the app, wait a few mins and try again?

You recommend ‘who’ to share photos with? That’s… interesting…

Yes! Let me know how it works for you.

will this be working with Cyanogen OS when your Android version comes out? i ask because i might want to have more control over permissions. is that even possible?

Can’t say for certain but we are big fans of Cyanogen.

Nope cigar != 1.

lame

Got it to work finally. Realized that by “kill the app” you mean restarting it by double-clicking the home button and swiping the app closed. Most people don’t know that even exists.

Yeah, sorry about that 🙂

Suggest: “Share photos with others by giving us your phone number. (You can always add this later or remove later…)” Very clear people will get hung up on this. No reason to have it hard coded in the app startup steps.

Very early in iOS evolution, developers were prevented by Apple from getting a unique identifier from the phone, so you had to generate a new ID every time the app was installed. The idea was to assure the person using the phone that she was not tracked between the usage of different apps. Asking upfront for the phone number and then validating it by means of an SMS message quite breaks the principle but not the law; because it is very probable that the phone number will never change, it can be tracked even when changing to a new phone. Companies should explain with pristine clarity in their user licenses how they use this identifying information. If you look at typical user licenses, they are often very vague and leave plenty of untied knots in this area. Trust is something that should be earned and not given away blindly and freely.

Is there a way to tell the OS why you’re asking for specific permissions so that it displays the reasons for each one to the end user? It seems like this would be a good feature to use, if it exists, to reassure more privacy-conscious users.

I can only grin at how to recommend 😀

Great app! Like the other commenter, I wonder why setup requires my phone number?

So you can share photos with others.

FWIW, I backed out of the app as soon as it asked for my phone number and won’t use it if that’s required.

Cool app, but every time I try to plug in my access code it shuts down my phone. Still buggy?

Well, 4th time was the charm. I am in.

IPhone smay phone. Android has more people which means if you want to reach significant scale you target that population.

This comment made me chuckle. The iPhone has 100mm US users alone. I think that’s a pretty significant scale. As long as the app comes to both platforms eventually, isn’t it kind of irrelevant where it starts?

Ryan Frew: “Everyone is entitled to his own opinion, but not to his own facts.” - Daniel Patrick Moynihan
DEFINITION of ‘Scalability’: A characteristic of a system, model or function that describes its capability to cope and perform under an increased or expanding workload. A system that scales well will be able to maintain or even increase its level of performance or efficiency when tested by larger operational demands.
IDC (SOURCE): Android dominated the smartphone market with a share of 82.8%. Samsung, the #1 contributor, had lower volumes QoQ and YoY. This comes in the midst of an underwhelming performance by its flagship releases, Galaxy S6 and S6 Edge. However, the Android share has seen a rise compared to 2015Q1, with strong growth in unit shipments by other players such as Huawei, Xiaomi and ZTE. iOS saw its market share for 2015Q2 decline by 22.3% QoQ with 47.5 million shipments. Despite the seasonal decline, Apple enjoyed success thanks to consumers’ insatiable appetite for the larger screened iOS devices. The popularity of the iPhone 6 Plus continued in many key markets including China, where the overall smartphone market saw a revival in growth by 6.7%. Windows Phone experienced a QoQ decline of 4.2% with a total of 8.8 million units shipped this quarter. Since its acquisition of Nokia in 2014, Microsoft has been revamping the product portfolio with Microsoft branded Lumia devices. But now that Microsoft has decided to take a loss on its Nokia purchase, the scenario for Windows Phone looks bleaker. Acer is a new entry into the top five in this segment. Most other vendors took a beating in shipments QoQ, with the exception of Samsung, which showed an 8.5% increase with its ATIV range of phones. Blackberry OS, which saw a small increase in some regions, continued to decline in growth globally. The bulk of its volume shipments came from the Blackberry Classic.
PS: Excuse our late response.

No one will disagree that Android has a larger market share. You’re right – that’s a fact. The issue, though, is that you’re saying an App should start with Android (Play), solely because it’s a larger market, when in fact there are multiple factors to consider. iPhone has a different demographic, which may be a better fit for the App. It’s also easier to develop and manage quality with Apple because there are so many fewer devices to worry about. Here’s a decent example: This is a photo-related app, right? Do you know what other photo app came to iOS 18 MONTHS before hitting Android? Instagram. And I’d say they’re doing alright, in spite of, or perhaps even because of, not starting with Google Play.

Ryan Frew: tsk tsk! Now do we list five successful apps that began on Android before iOS? No, because depending upon the app and intended market the developers make the call. The developers receive two to one more compensation when developing the iOS app. So the incentive is for a developer to develop in iOS. No response is required on this subject, just hit the game winning basket, game over. We will see you at the next game.

Yes!! Just what I needed

Someone should develop a crowd sourced website where, for fun and prizes, you get to try and critique new free apps and provide feedback, similar to what is being done here. The apps would pay a fee to be featured, the feedback would be free with some payback (or karma) for giving good advice. Something like that. One app per day. Name suggestion: critmyapp.com

ProductHunt is pretty close to that – https://www.producthunt.com…

Very cool but how is this different than Google Photos?

You really need to install it to see, but here are a few reasons:
1. You can teach Forevery any custom thing you want. This goes way beyond what others are doing today. It means instead of ‘dog’, you can actually teach it your dog ‘Milo’ and then it will recognize Milo every time you take a picture of her.
2. Sharing. When you want to share photos, Forevery recommends who to share them with. This goes way beyond ‘Jill is in this photo, share it with her’. Imagine stuff like ‘Your daughter is in this photo, share it with your wife’, ‘The Giants are in this photo, share it with your 3 buddies’.
3. Search. Forevery allows you to combine searches “Jill, Sushi, New York City”. Try doing that with other photo apps!

I don’t have an iPhone, Android only, so can’t check myself. Does this store your photos or just layer on top of your phone’s photos? Google Photos is limited compared to this (as you describe), but it has indexed all 67,000 photos and videos I have stored in the cloud.
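To make the combined search in point 3 concrete, here is a minimal sketch of one way such a query could be answered once every photo has a set of tags. It is an assumption for illustration only, not a description of how Forevery is built: the tag data, the comma-separated query format, and the names `build_index` and `search` are all hypothetical.

```python
from collections import defaultdict


def build_index(photo_tags):
    """Build an inverted index: lowercase tag -> set of photo ids carrying it."""
    index = defaultdict(set)
    for photo_id, tags in photo_tags.items():
        for tag in tags:
            index[tag.strip().lower()].add(photo_id)
    return index


def search(index, query):
    """Return the photos that match ALL comma-separated terms in the query."""
    terms = [t.strip().lower() for t in query.split(",") if t.strip()]
    if not terms:
        return set()
    # Start from the first term's matches, then intersect with each further term.
    result = set(index.get(terms[0], set()))
    for term in terms[1:]:
        result &= index.get(term, set())
    return result
```

With an index built from, say, `{"a.jpg": ["Jill", "sushi", "New York City"], "b.jpg": ["sushi"]}`, the query `"Jill, Sushi, New York City"` would return only `a.jpg`, since it is the only photo carrying all three tags. A real product would presumably rank results by recognition confidence rather than treat tags as strictly present or absent.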

It’s currently only for your Camera Roll.

I definitely think that facial and object recognition is the way of the future with photos, and probably in analogous ways with video, audio, and all other non-written media. It’s extremely useful to be able to look up pictures without having to manually search or manually tag them to a certain location, person, type, etc. But I always wonder how products like this will fare as the native platforms (Android, iOS) develop this functionality in-house and integrate it deep into the phone operating system. Google Photos already does a great job at all of this, and its integration with Maps, Google+, Gmail, is helpful. I think iOS has started to roll out similar functionality. Thoughts?

I agree with what you say in the first paragraph. This is how AI is coming to know us, and when it gets over its fixation with advertising, then it will become a fundamental piece of our relationship with machines and technology. In the case of this particular product, Clarifai is showcasing the advanced technology they have developed and that is available to anyone who signs up to use their API. They may very well be ahead of Google and Apple in their area of expertise. Apple’s AI manifestation, Siri, was an acquisition; who knows what Clarifai’s intentions are.

Apple already acquired Perceptio: http://appleinsider.com/art…

Interesting, so Siri will get eyes too. The academic credentials and experience of both the Clarifai and Perceptio teams are impressive. Is CS getting harder to tackle?

yes. There are a ton of core math problems at hand to be solved

@lawrencebrass:disqus – Core math problems of this magnitude. Between them Google’s founders, Geoff Hinton, Peter Norvig, Ray Kurzweil, Amit Singhal, Jeff Dean and their thousands of engineers and special relationship with Stanford and Oxford University can pretty much resource whatever Mathematical tools and Quantum computers exist to solve narrow AI and process data correlations at speeds faster than everyone else. Ditto LeCun at Facebook, Andrew Ng at Baidu, the Apple AI and IBM Watson team. However for General Intelligence AI & Natural Language … Well … Someone would need to create a Quantum maths model and be able to code it into a simple system that all 7+ billion people could potentially use, :*). FUN problem to solve, si?

Maybe there should be a split between generalized intelligence and natural language

Natural Intelligence is a part of Generalized Intelligence. For a long time, the argument was made there was no need for us to understand how our minds work (memory, consciousness, emotions of embodied experiences etc) because the maths we’ve inherited is just about “good enough” for us to model externalities (such as the optics involved in visual recognition) and consciousness is too complex for us to try to model mathematically. However, as Google is discovering …. the mathematics they can do is not enough. That’s why “Meaning is something that has eluded computer science” as their SVP of Search says. The meaning in language is inherently bound to our consciousness. This occurred to me when I was talking to my Dad as he lay in his coma. As I wrote elsewhere, I made it my business to learn about the signal:noise inside my Dad’s brain. The neurosurgeons ended up apologizing to us in coroner’s court because I proved that their models of consciousness and how to measure for it were wrong. My Dad had lost the faculty of visual perception, speech and his other motor functions. Yet he could perceive and understand us. That’s how I knew Descartes was wrong and once I discovered this, well … I set off on a mission to invent and build some systems. To “Do and make something meaningful with my life.”

The most I can say is there is a reason I am saying what I am saying, and that it is not clear that speech/meaning is tied to consciousness as we know it. It is totally possible that language itself is probabilistic, but meaning is at least partially outside of language, which is why it is possible to gain emotional memories/behavior sets and have no realization of it because parts of the hippocampus are damaged.

Thanks, Shana. Is there specific academic research you’ve seen on this neuroscience? From my perspective, language and probability are HUMAN-CREATED tools, with language being created before probability. Ergo, this logical argument follows: language (qualification & quantification) defined probability (quantification), and not vice versa. Google and others’ probabilistic basis is precisely why “Meaning has eluded computer science”. @lawrencebrass:disqus: THIS type of CS is harder. Going up against giants that have led us down the wrong paths for modeling human language, meaning & behavior and, therefore, economics. Imagine being in Galileo’s, Copernicus’s, Newton’s or Einstein’s shoes and reasoning that, “Earth is round with gravity and space has quantum relativity and new tools need to be invented to show and prove this” whilst most see through prisms of “Earth is flat” and a belief that probability is the panacea tool by which to model and codify everything. @fredwilson:disqus: Differences matter. Innovation happens when we see precisely where we disagree with others, so then we may decide to stand on the shoulders of other giants. Google et al chose Descartes & Bayes (C16-18th). I chose Da Vinci, Einstein and Yin+Yang (1000 BC). My big bet is that they’re more intelligent than Descartes and Bayes.

Wikipedia has a discussion about free will and neuroscience here: https://en.wikipedia.org/wi… It is a hugely research-heavy area right now, partially because CS, neuroscience, and philosophy do not have matching definitions when it comes to terms like cognition or language, when we want to talk about their epistemology. It is becoming clearer that the Mind-Body problem is definitely phrased incorrectly, and that experimental science is sound, but interpretation is a bit wonky because we are on the edge of a Kuhnian paradigm shift on even how to think about such problems. Either way, the evidence is strong enough that if I were to get into a severe enough accident, my fiance*, who I legally assigned as my medical proxy after he saved my life when we were dating, is instructed/allowed to do as much testing on my state of consciousness/brain being there before making decisions (with the caveat: to talk first to one of my best friends, who is also the second proxy, if he declines for whatever reason). Specifically about language in CS: Can’t talk about it. See engaged things. *Guys, I’m old now, I got engaged over the summer. We kept it quiet for family reasons on my side. But yeah, I’m now officially old, and engaged.

Clarifai is all Yann Lecun people, so we know it is all convolutions

Thanks for the pointer Shana, I had read about Yann Lecun before but I was really unaware of his work and influence. Just read this, published Feb, 2015. Recommended.http://spectrum.ieee.org/au…quoted, from the linked article:”Hype and Things That Look Like Science but Are NotSpectrum: Hype is bad, sure, but why do you say it’s “dangerous”?LeCun: It sets expectations for funding agencies, the public, potential customers, start-ups and investors, such that they believe that we are on the cusp of building systems that are as powerful as the brain, when in fact we are very far from that. This could easily lead to another “winter cycle.””

Here’s a hierarchy of hardness and some timelines for how long it takes for academic theories to surface in commercial products. @fredwilson:disqus rightly wrote about the need for founders to source coaches. The challenge for AI startup founders in finding suitable coaches is factorially harder for this simple reason: there are lots of great ex-CEOs out there who’ve built & exited general tech cos. There are only about 10 ex-CEOs in the world who’ve successfully exited AI startups (e.g., Siri, DeepMind, Perceptio, Boston Dynamics) and would know how to coach the next gen of AI innovators.

@wmoug:disqus – timelines in the AI sector to put into context why some AI startups take 30+ years. @ShanaC:disqus – The choice is something like this: spend the next 50 years as an academic and publish lots of obscure research papers that someone else might then use as a basis to build a commercial system with … OR … Have idea. Design & code system. Release. Adapt & improve on-the-fly. Catalyze unique knowhow to accelerate the timeline so idea => implementation is less than 5 years. If I wait for Tegmark and the various other Quantum Physicists and neuroscientists to come up with suitable equations, I’d be dead before they managed to solve it: https://www.youtube.com/wat… My Dad died following a coma so I made it my business to learn about data signal:noise in the brain and then I mapped those insights over to solve the signal:noise in the Global Brain that is the Web.

Idle musings… I wonder whether the name’s meant to be read as “forever-y” or “for-every”, or some combination of both; also how it would interpret a Dali or Picasso rendition (fun with edge cases).

Although it misses the mark at times, it is nonetheless a landmark moment, IMO, for what is to come. It does a lot of things right. And it has really good UI/UX. Still, as impressive as it is, I would only use it if it were cleverly incorporated in the iOS search or in the Apple photo app; it’s not wowing me enough as it is now to make me keep the app on my phone’s homepage for ready access. I’ve buried it in my ‘photography’ folder, which means it’ll very rarely be used, if ever. Having said that, I didn’t delete it either, which is the fate that most apps I try typically receive. The bar is so high right now… Anyway, what is valuable is the underlying technology that the company has developed, and not whether this particular use case will gain wide adoption; it serves as a great demo of what the technology can do.

It is integrated into iOS search!

Thanks for letting me know! I retract what I said about not wanting to use it. This makes the app a lot more valuable to me and I believe I will be drawn to using it. Bravo, your team has done a real tour de force, IMO.

This is great – addresses a need that has existed for a while and so far, using it a bit, I am really impressed with how well it works. Correct me if I am wrong, but it can only search and analyze photos on the device itself, right? Is there a way to point it at a storage location like a Dropbox folder, Amazon’s photo storage service, or iCloud? It would be great to be able to search photos that have been moved off the phone and out of Camera Roll.

Right now it’s only Camera Roll / iCloud. What service would you want?

What’s the monetization strategy? I’d prefer to know that before giving my data.

API resale on a mass level, but it probably needs training data

Wow, this is super cool.

#appdiscovery

🙂 Everyone is so happy in the pictures

I wonder if Adobe’s Lightroom has something similar.

I’d like to see this on top of Google Photos.

This is huge. Learned about Clarifai from Andrew Pile while at Vimeo. Was waiting for the killer consumer iOS app. Shocked that Google/Apple haven’t made an irresistible acquisition offer yet.

Can I export the tags? How do I run the API from my desktop? Will you ever support a model where I run the AI on my desktop?